Revision as of 15:12, 14 May 2021

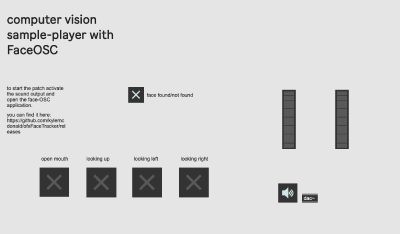

I built a patch that uses computer vision to control sound playback with head gestures.

Here is the patch and a video that explains how to use it and what you can do with it.

File:facetracking_sampler_leon_g.maxpat

The patch was developed while building an interactive installation that experiments with extending language by combining it with sound.

I built the prototype, called compo, which provides an instrument for creating future interactive compositions between words and music. It is documented here: https://wwws.uni-weimar.de/kunst-und-gestaltung/wiki/GMU:Artists_Lab_IV/Leon_Goltermann