
* Creating Images with jit.matrix [https://www.youtube.com/watch?v=M7VrjJJFQbM&list=PLvpdUWhGx43-OttXG4lp11-UohI3wPeqV&index=22]

* RGB Glitch Effect [https://www.youtube.com/watch?v=sO5NaTjBvL8]


Revision as of 12:09, 10 June 2021

FINAL PROJECT: pulse — distorting live images

10.06.2021 exploring effects

update on the pixel manipulation

I was not happy with the results of the pixel manipulation. Online, I found an effect that I really like; it is used for motion tracking. If the object is still, you don't see it at all. If it moves slowly, you can barely see its outline. The faster it moves, the more of it becomes visible.
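The effect described above behaves like frame differencing: each output pixel is the absolute difference between the current frame and the previous one, so static areas turn black and only moving parts light up. The faster something moves, the further it travels between two frames and the more pixels change, which is why more of it becomes visible. The Python sketch below only illustrates the idea and assumes greyscale frames stored as lists of rows with values from 0 to 255; in Max, a similar chain could be built with jit.op @op absdiff against a delayed copy of the frame, but that is an assumption, not necessarily what the patch uses.

```python
def frame_difference(prev, curr, threshold=0):
    """Return a new frame whose pixels are |curr - prev|.

    prev, curr -- greyscale frames as lists of rows (values 0 to 255)
    threshold  -- differences at or below this value are set to 0 to
                  suppress camera noise (hypothetical tuning value)
    """
    out = []
    for prev_row, curr_row in zip(prev, curr):
        row = []
        for p, c in zip(prev_row, curr_row):
            d = abs(c - p)
            row.append(d if d > threshold else 0)
        out.append(row)
    return out


# A bright pixel moves one step to the right between two frames:
# both its old and its new position light up, everything else stays black.
prev = [[0, 200, 0, 0]]
curr = [[0, 0, 200, 0]]
print(frame_difference(prev, curr))  # [[0, 200, 200, 0]]
```

If the frames are identical (a still object), every pixel of the result is 0.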

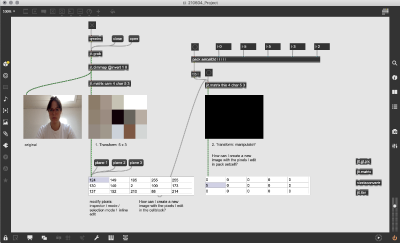

I changed the colour of the image by splitting it into its separate planes with jit.unpack and recombining them with jit.pack. To capture a snapshot, I used the microphone input, which is triggered by snapping your fingers or clapping your hands. Every snapshot should be saved automatically, but somehow this didn't work yet; for now, you have to save it manually.
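A clap or finger-snap trigger usually needs two parts: a level threshold and a short cooldown, so that one clap (which stays loud for several readings) fires only one snapshot. The Python sketch below shows that logic on a list of amplitude readings; the values 0.5 and 10 are hypothetical, and the amplitude is assumed to come from something like an MSP level follower (e.g. peakamp~), which is an assumption about the patch.

```python
def detect_claps(levels, threshold=0.5, cooldown=10):
    """Return the indices at which a snapshot would be triggered.

    levels    -- microphone amplitude readings (0.0 to 1.0), one per time step
    threshold -- level a clap or finger snap must exceed (hypothetical value)
    cooldown  -- number of steps ignored after a trigger, so one loud clap
                 does not fire several snapshots in a row
    """
    triggers = []
    last = None
    for i, level in enumerate(levels):
        if level > threshold and (last is None or i - last >= cooldown):
            triggers.append(i)
            last = i
    return triggers


# The clap at index 1 is still loud at index 2, but only one trigger fires.
print(detect_claps([0.1, 0.9, 0.8, 0.2, 0.1, 0.95, 0.1], cooldown=3))  # [1, 5]
```

Without the cooldown, the same input would trigger at indices 1, 2, and 5, saving two snapshots for a single clap.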

Here are the patch, File:210609_Moving Fast.maxpat, and some screenshots.

04.06.2021 trying to manipulate the webcam image pixel by pixel

Here is the patch: File:210604_Project.maxpat

I can't figure out how to create a new image or video from the edited cellblock (the edited pixels). Do you have a tutorial or references for that? I am wondering whether an operation that manipulates the whole image, rather than individual pixels, would be more interesting. That is probably why you recommended jit.gl.pix, but I can't get it to work.
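The difference between editing single cells and processing the whole image can be sketched in plain Python. This is not Max code and not the jit.gl.pix API; it only models the idea behind jit.gl.pix and gen as far as it is understood here: instead of changing pixels one by one and copying them back, you write one rule that computes each output pixel from the input image, and that rule is run for every pixel to produce a brand-new frame. The `wave_shift` distortion is a hypothetical example, assuming greyscale frames stored as lists of rows.

```python
import math


def render(frame, shader):
    """Build a new frame by calling `shader` once for every output pixel.

    frame  -- input image as a list of rows of greyscale values
    shader -- function(x, y, frame) -> value; it may read any input pixel,
              which is what makes whole-image effects such as displacement possible
    The input frame is never modified; a fresh frame is returned.
    """
    height, width = len(frame), len(frame[0])
    return [[shader(x, y, frame) for x in range(width)] for y in range(height)]


def wave_shift(amount):
    """Hypothetical distortion: shift each row sideways along a sine wave."""
    def shader(x, y, frame):
        height, width = len(frame), len(frame[0])
        offset = int(round(amount * math.sin(2 * math.pi * y / height)))
        src_x = min(max(x + offset, 0), width - 1)  # clamp at the image edges
        return frame[y][src_x]
    return shader


frame = [[10, 20, 30, 40] for _ in range(4)]
print(render(frame, wave_shift(1)))
# [[10, 20, 30, 40], [20, 30, 40, 40], [10, 20, 30, 40], [10, 10, 20, 30]]
```

Because the rule only reads from the input and writes to a new frame, the result is a complete new image every time, which is the part that is hard to get from editing cells directly.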

Here are some reference videos I found online

20.05.2021

A normal pulse is regular in rhythm and strength. Sometimes it is faster, sometimes slower, depending on the situation and on a person's health. You rarely see it and only feel it from time to time. Apart from the visualisation of an EKG, what could it look like? How can this vital rhythm be visualised?

I would like to use an Arduino pulse sensor to create an interactive installation. The setup consists of the pulse sensor, a webcam, and a screen. The user connects to Max simply by clipping the sensor to their finger. The screen then shows a pulsating, distorted webcam image of the participant: the pulse data stretches the image, which moves in the rhythm of the pulse and creates a new result.
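One way to make the image "move in the rhythm of the pulse" is to let every heartbeat kick the stretch up to a maximum and then let it relax back to normal, similar to an envelope in audio. The Python sketch below assumes beat times in seconds are already known; `max_stretch` and `decay` are hypothetical tuning values, and the returned factor could, for example, drive the horizontal scaling of the webcam image.

```python
import math


def stretch_factor(t, beat_times, max_stretch=1.3, decay=5.0):
    """Return a scale factor for the webcam image at time t (seconds).

    At each heartbeat the image jumps to `max_stretch`, then relaxes
    exponentially back to 1.0 (no distortion) until the next beat.
    max_stretch and decay are hypothetical tuning values.
    """
    past = [b for b in beat_times if b <= t]
    if not past:
        return 1.0  # no heartbeat yet: show the undistorted image
    since = t - max(past)
    return 1.0 + (max_stretch - 1.0) * math.exp(-decay * since)


beats = [0.0, 0.8, 1.6]  # roughly 75 beats per minute
for t in (0.0, 0.2, 0.5, 0.8):
    print(t, round(stretch_factor(t, beats), 3))
```

A larger `decay` makes each beat a short, sharp jolt; a smaller one makes the image breathe more smoothly between beats.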

A more complex version would be the interaction of two pulses: two Arduino pulse sensors are connected to Max, and a single distorted webcam image shows the combination of both people's pulses. This idea could be developed further during the semester.
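How the two pulses are combined is an open design question. One simple option, sketched below in Python as an assumption rather than a decision, is to give each person an envelope that peaks at their heartbeat and to average the two: the image then distorts most strongly when both hearts beat together and only half as much when they alternate. All names and values are hypothetical.

```python
import math


def envelope(t, beat_times, decay=5.0):
    """1.0 exactly at a heartbeat, fading exponentially towards 0.0 afterwards."""
    past = [b for b in beat_times if b <= t]
    return math.exp(-decay * (t - max(past))) if past else 0.0


def combined_distortion(t, beats_a, beats_b, decay=5.0):
    """Hypothetical mix of two people's pulses into one distortion amount (0 to 1).

    Averaging the envelopes rewards synchrony: coinciding beats give 1.0,
    alternating beats give roughly half of that.
    """
    return 0.5 * (envelope(t, beats_a, decay) + envelope(t, beats_b, decay))


print(combined_distortion(1.0, [1.0], [1.0]))  # 1.0 (beats coincide)
print(round(combined_distortion(1.0, [1.0], [0.0]), 3))  # about 0.5 (beats far apart)
```

Other mappings, such as letting each person control a different axis of the stretch, would fit the same structure by returning two values instead of one.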

How it works: an Arduino pulse sensor measures the pulse at the finger. Usually, this kind of sensor is used to measure the radial pulse. In Max, a patch has to be created that converts the data from the Arduino into parameters that distort the image.
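The first step of such a patch is turning the raw sensor values into beats and a heart rate. The Python sketch below assumes the Arduino sends analog readings (0 to 1023, as from `analogRead`) at a fixed interval; in Max they would typically arrive through the serial object. The thresholds are hypothetical and would need to be tuned to the actual sensor signal. Two thresholds (hysteresis) are used so that small wobbles near the peak are not counted as extra beats.

```python
def detect_beats(samples, sample_period, high=550, low=500):
    """Return beat times (seconds) from raw pulse-sensor readings.

    samples       -- analog readings (0 to 1023)
    sample_period -- seconds between two readings
    high, low     -- hypothetical thresholds: a beat is counted when the signal
                     rises above `high`; the detector re-arms only after the
                     signal falls below `low`, which avoids double counts
    """
    beats = []
    armed = True
    for i, value in enumerate(samples):
        if armed and value > high:
            beats.append(i * sample_period)
            armed = False
        elif not armed and value < low:
            armed = True
    return beats


def bpm(beats):
    """Average heart rate from the intervals between beats, or None if < 2 beats."""
    if len(beats) < 2:
        return None
    intervals = [b - a for a, b in zip(beats, beats[1:])]
    return 60.0 / (sum(intervals) / len(intervals))


# The wobble 600 -> 540 -> 560 is not counted as a second beat.
beats = detect_beats([480, 600, 540, 560, 450, 610, 470, 480], 0.25)
print(beats)       # [0.25, 1.25]
print(bpm(beats))  # 60.0
```

The beat times can then drive the image distortion directly, while the BPM value could control slower parameters such as colour or overall intensity.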

some links: