IFD:EAI SoS21/course material/Session 4: Programming the Classifier Part1

Revision as of 20:57, 5 May 2021

Solutions to Homework Task 2

Spoiler Alert! Again, accept the challenge and try on your own first! :)

How our classifier works

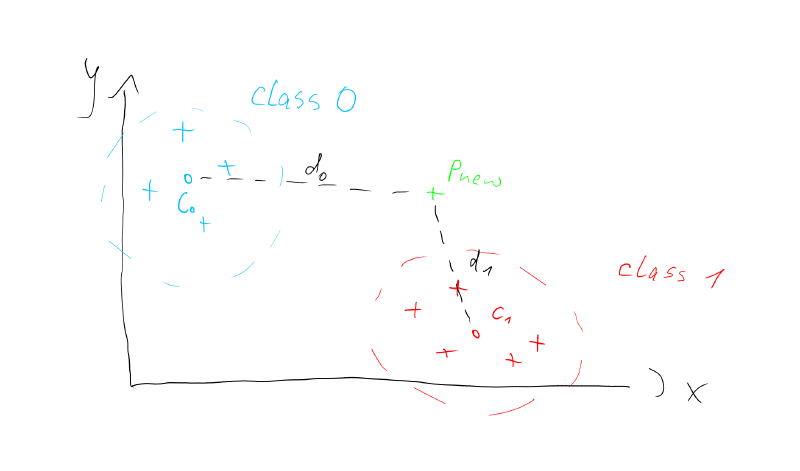

Until now, we have been looking at points on a 2D plane. We know how to calculate the distance between them and how to find the centroid of a point cloud. Let's call these point clouds classes from now on. With this tool set at hand, we can already code a simple classifier. For example, we could detect whether any given point (P_new) is closer to the point cloud we call class_0 or to the one we call class_1. We find out by calculating the distances from P_new to the class centroids c_0 and c_1, as shown in the picture.
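The comparison described above can be sketched in a few lines of C++. This is only a minimal illustration: the struct `Point2D` and the functions `euclideanDistance` and `closestClass` are hypothetical names chosen here, and the Point2D class from the session may look different.

```cpp
#include <cmath>

// Hypothetical minimal 2D point; the course's own Point2D class may differ.
struct Point2D {
    float x;
    float y;
};

// Euclidean distance between two points on the plane.
float euclideanDistance(const Point2D& a, const Point2D& b) {
    float dx = a.x - b.x;
    float dy = a.y - b.y;
    return std::sqrt(dx * dx + dy * dy);
}

// Returns 0 if p is at least as close to c_0 as to c_1, otherwise 1.
// (Sending ties to class 0 is an arbitrary choice made for this sketch.)
int closestClass(const Point2D& p, const Point2D& c_0, const Point2D& c_1) {
    return euclideanDistance(p, c_0) <= euclideanDistance(p, c_1) ? 0 : 1;
}
```

With made-up centroids c_0 = (1, 1) and c_1 = (5, 5), the point P_new = (2, 1.5) lies closer to c_0, so `closestClass` returns 0.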

Why would we want to do that?

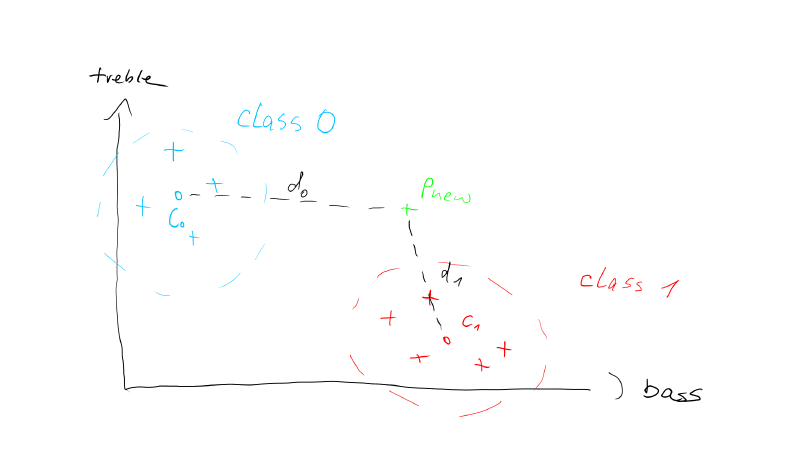

Imagine the points are not just drawings on a piece of paper, but actual measurements of real-world objects. So instead of giving the axes in the figure arbitrary names like 'X' and 'Y', we can label them with meaningful measures of a short sound recording: 'bass' and 'treble'.

Now we can think of class_1 as representing lower-frequency sounds and class_0 as representing sounds containing higher frequencies. Our new point P_new is a measurement of a new sound that we want to classify. That means we want to know whether it belongs to the low- or the high-frequency sounds.

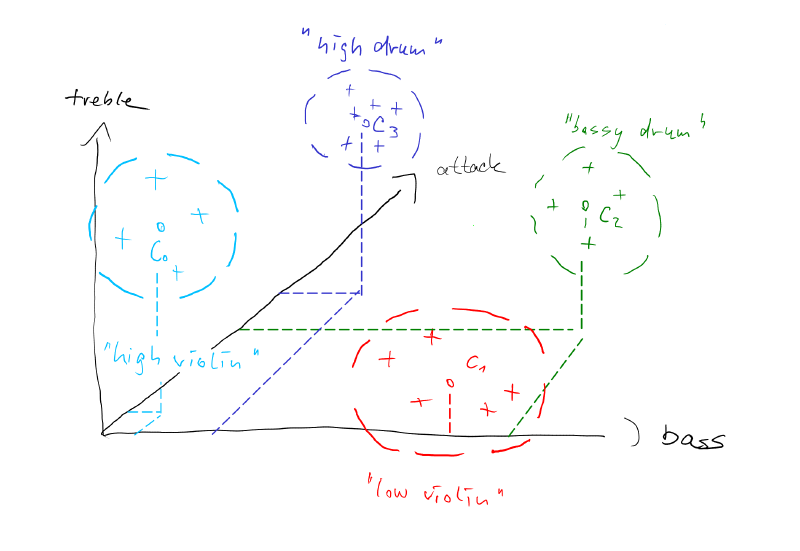

Note that we can easily extend this concept by taking more measurements of our sounds. For example, we could measure how sharp the onsets of our sounds are. A drum sound will have a very sharp onset, whereas a violin sound will have a smooth onset. We call this measurement the 'attack' of a sound. Now let's add the attack as a third dimension to our picture. The attack axis will point into the screen.

The more attack we have, the sharper the sound. That implies that drum-like sounds would be further into the screen, and sounds with soft onsets, like a softly bowed violin, would be closer to us. Note that adding just this one dimension enables us to discern a much greater variety of sounds.
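With the third axis, the distance between a sound P and a centroid c simply gains one more squared term. The symbols b, t and a (for bass, treble and attack) are introduced here for illustration only:

$$
d(P, c) = \sqrt{(b_P - b_c)^2 + (t_P - t_c)^2 + (a_P - a_c)^2}
$$

Every further measurement we add to our sounds contributes another squared difference under the root in the same way.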

Description of the classifier code

There are a few new elements that we added to our code this week. We added a new class called 'KMeans'. Inside this class will be the guts of our classifier. To be able to successfully classify a new point (a sound), the classifier actually only needs to know the centroid of each class and a way to find the closest centroid to our new point.

As a first step the classifier needs to calculate the centroids. To do that we feed it with the following data:

- all our given points (=measurements of sounds)

- for every point we tell the classifier to which class it belongs (=classLabels)
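How the centroids could be derived from these two inputs is sketched below. This is not the KMeans class from the session; the function name `computeCentroids`, the `numClasses` parameter and the plain `Point2D` struct are assumptions made for this example. It further assumes that the labels are integers from 0 to numClasses - 1 and that there is exactly one label per point.

```cpp
#include <cstddef>
#include <vector>

// Hypothetical minimal 2D point; the course's own Point2D class may differ.
struct Point2D {
    float x;
    float y;
};

// Computes one centroid per class: the mean of all points carrying that label.
// Assumes labels lie in [0, numClasses) and points.size() == classLabels.size().
// A class without any points keeps the centroid (0, 0).
std::vector<Point2D> computeCentroids(const std::vector<Point2D>& points,
                                      const std::vector<int>& classLabels,
                                      int numClasses) {
    std::vector<Point2D> sums(numClasses, Point2D{0.0f, 0.0f});
    std::vector<int> counts(numClasses, 0);

    // Add up the coordinates of all points, separately for each class.
    for (std::size_t i = 0; i < points.size(); ++i) {
        int label = classLabels[i];
        sums[label].x += points[i].x;
        sums[label].y += points[i].y;
        ++counts[label];
    }

    // Divide each sum by the number of points in its class to get the mean.
    for (int c = 0; c < numClasses; ++c) {
        if (counts[c] > 0) {
            sums[c].x /= counts[c];
            sums[c].y /= counts[c];
        }
    }
    return sums;
}
```

Once the centroids are known, classifying a new point only requires comparing its distance to each of them; the original points are no longer needed.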

Homework

This week your task will be to modify the code from Monday's session to work on n-dimensional data points. "N-dimensional" means the number of dimensions can be chosen when we instantiate the clusterer. So the same code should work for 2 dimensions (as we programmed it already), 3 dimensions, 4 dimensions and so on...

Feel free to fork the repl.it and include a new class "PointND":

The class PointND should be structured as shown in the following header file:

    #ifndef PointN_H
    #define PointN_H

    #include <vector>

    using namespace std;

    class PointND {
    public:
        PointND(int dimensions); // constructor for zero point of n-dimensions
        PointND(vector<float> newND); // constructor for a point which copies the coordinates from an n-dimensional vector

        PointND operator+(PointND& p2);
        PointND operator/(float f);

        float getDim(int idx_dimension); // get the component of the indexed dimension, (this was getX() and getY() before)

        int size() // return how many dimension our current point has!
        {
            return _pointND.size();
        }

        void print();

        float getDistance(PointND&); // extend the euclidean distance to n dimensions

    private:
        vector<float> _pointND;
    };

    #endif

Your task is to:

- Implement a PointND.cpp file that behaves like our class Point2D on n-dimensional points

- Test that class with a 3D distance measurement, and on success

- Modify our clusterer to work with the new PointND class!
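If you want a reference value for the 3D distance test, the sketch below computes the Euclidean distance for any number of dimensions. It is deliberately written as a free function on plain coordinate vectors rather than as the PointND class itself; the name `distanceND` is an assumption of this example, and both vectors are assumed to have the same length.

```cpp
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical free function, not part of the PointND header.
// Euclidean distance between two points given as coordinate vectors.
// Assumes a.size() == b.size().
float distanceND(const std::vector<float>& a, const std::vector<float>& b) {
    float sumOfSquares = 0.0f;
    for (std::size_t i = 0; i < a.size(); ++i) {
        float diff = a[i] - b[i];
        sumOfSquares += diff * diff; // one squared difference per dimension
    }
    return std::sqrt(sumOfSquares);
}
```

For the points (0, 0, 0) and (1, 2, 2) the sum of squares is 1 + 4 + 4 = 9, so the distance is 3. Your `getDistance` should give the same value for the same two points.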

Have fun and if you're stuck, write a mail or post a message in the forum!

Best wishes, Clemens