InterFace: How You See Me

InterFace is an interactive tool that detects emotions from facial expressions and creates an additional layer of communication between the wearer and the viewer. When an emotion is detected on the wearer's side, it is translated into a set of colors shown to the viewer, who in turn responds to colors that carry relatively universal meanings. It is designed for use in public space, but rather than being the center of attention, it aims to exist in the periphery while still stimulating the people around it.

Abstract

Human evolution has produced many traits that allow large groups of people to form greater societies, ideally living in harmony with each other. One of the most influential of these traits, and one that lets us build and sustain such social structures, is our ability to empathize with the people around us. In modern societies, however, we are drifting further apart and getting lost in the rush of everyday problems. Our perception of social interaction becomes confined to a close circle, even though we encounter many different faces in a single day. As those faces blur, the uplifting effect of being social and sharing decays even further. The project examines the effects of emotions conveyed through facial expressions in the contexts of empathy and modern social structures.

The process of empathy starts with people imagining themselves in another person’s shoes and trying to form meaning out of it. This involves paying attention to the other person's body language, facial expressions, tone of voice, and words, as well as considering their past experiences and current circumstances. Several experts think that mirror neurons, or at least a similar mechanism, play a role in some basic forms of empathy. Mirror neurons associated with mouth movements and the ability to imitate facial expressions are likely the foundation for being emotionally in tune with others. While the embodiment of emotions does not cover all aspects of empathetic experience, it provides a straightforward explanation of how we may share emotions with others and how this skill could have evolved (Ferrari & Coudé, 2018).

Moreover, reading the emotions of others is a naturally evolved survival mechanism that helps us avoid unwanted situations. Adams et al. (2006) report two studies on how accurately observers detect movement in angry and fearful faces that move either towards or away from them. They found that observers were quicker to correctly identify angry faces moving towards them, suggesting that anger displays convey the intent to approach. The results were not the same for fearful faces, however, which may indicate that fear signals a "freeze" response rather than fleeing behavior. Translating the emotions of one party to another therefore plays an essential role in sharing “data” that the body's receptors collect from the outer world. Expressions of emotion are a means of non-verbal communication and, unlike gestures, which can change from culture to culture, they are also relatively universal. According to Ekman (1970), basic emotions have a pancultural nature: they are identified and expressed in similar ways across cultures, with the same facial muscle responses.

The embodiment of emotions through facial expressions is a means of communication with the outer world. However, it differs from vocal and other forms of communication in that it is not self-reflective: people cannot see or feel the immediate effect of their own expressions. Instead, the expression travels to the other party, is evaluated there, and has its effect on them, and that is where the reflection forms. One person feels the emotion, but the other one sees the facial expression. The viewer is the bridge to the outer world as well as the reflection of the inside.

To explore the nature of these interactions through facial expressions of emotion in a bigger picture, and to disrupt the woven structure of daily life, InterFace seeks to create a space that emphasizes the power of individual emotions by making them visible and vivid to the outside world.

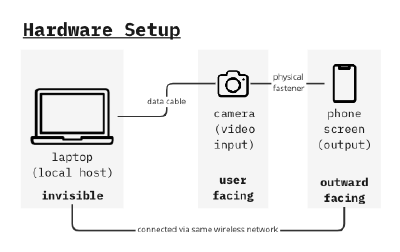

Hardware Setup

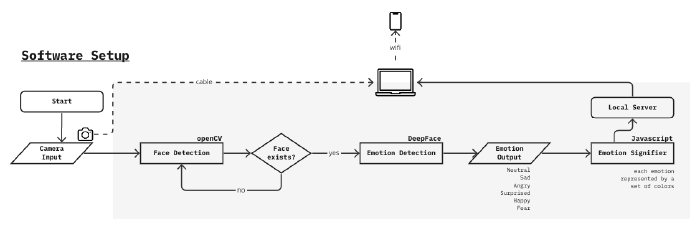

Software Setup

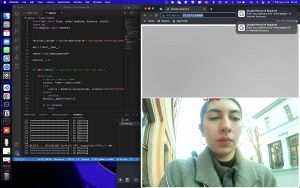
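The translation from a detected emotion to a color, as described in the introduction, could be sketched roughly as follows. This is a minimal illustration and not the project's actual code: the `EMOTION_COLORS` palette, the RGB values, and the function names are all hypothetical, and the input is assumed to be a dictionary of per-emotion scores similar to what an emotion detection library such as DeepFace reports.

```python
# Hypothetical palette: these RGB values are illustrative assumptions,
# not the colors actually used in InterFace.
EMOTION_COLORS = {
    "angry": (220, 40, 40),
    "disgust": (110, 140, 40),
    "fear": (90, 40, 130),
    "happy": (250, 210, 50),
    "sad": (40, 80, 180),
    "surprise": (250, 140, 30),
    "neutral": (160, 160, 160),
}


def dominant_emotion(scores):
    """Return the emotion label with the highest score."""
    return max(scores, key=scores.get)


def blend_color(scores):
    """Mix the palette colors, weighted by each emotion's score.

    `scores` maps emotion labels to non-negative numbers (assumed input
    format). Labels missing from `scores` count as zero.
    """
    total = sum(scores.get(label, 0.0) for label in EMOTION_COLORS)
    if total <= 0:
        # No usable signal: fall back to the neutral color.
        return EMOTION_COLORS["neutral"]
    return tuple(
        round(
            sum(scores.get(label, 0.0) * rgb[channel]
                for label, rgb in EMOTION_COLORS.items()) / total
        )
        for channel in range(3)
    )
```

Blending by score rather than showing only the dominant emotion is one possible design choice; it lets mixed expressions produce intermediate colors instead of abrupt jumps.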

See the detailed development process below

Hardware and Software Systems Processes

Interaction

Video Walk

As InterFace is an object meant for public space, its interactivity was evaluated with a video walk. The walk started at the university campus, followed the most crowded streets to Theaterplatz, and then returned to the campus. Observations along this route show that most interaction occurred when the wearer was facing the viewer and the distance between them was small (as when walking on the same sidewalk in opposite directions). In some instances, curious passers-by turned their heads for another look after passing. Moreover, interest in the tool was more visible once the observational video recording was stopped and it became a standalone object.

Emotions on the viewer screen after the video walk

During the walk, the emotion output was saved with an automated screenshot script, while the laptop in the bag was remote controlled to ensure the stability of the system. The collected emotion visuals (static images) were then blended together with a frame interpolator to create the transitions between them.
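The idea of generating transitions between static images can be illustrated with a plain linear cross-fade. This is a deliberately simpler stand-in, not the frame interpolator used in the project (which the text does not name): the function name `crossfade` and the frame representation, a flat list of RGB tuples, are assumptions made for the sketch.

```python
def crossfade(frame_a, frame_b, steps):
    """Return `steps` intermediate frames between frame_a and frame_b.

    Each frame is a flat list of (r, g, b) tuples (assumed representation).
    The endpoints themselves are not included in the result.
    """
    if len(frame_a) != len(frame_b):
        raise ValueError("frames must have the same number of pixels")
    frames = []
    for i in range(1, steps + 1):
        # t runs strictly between 0 and 1, evenly spaced.
        t = i / (steps + 1)
        frames.append([
            tuple(round((1 - t) * a + t * b) for a, b in zip(pixel_a, pixel_b))
            for pixel_a, pixel_b in zip(frame_a, frame_b)
        ])
    return frames
```

A cross-fade only mixes pixel values, whereas a frame interpolator typically estimates motion between images, so the result here would look like a dissolve rather than a true in-between frame.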

Discussions

Even though emotion detection with AI technology has been widely researched and used in a variety of applications, it is essential to consider its drawbacks. One of the main limitations is accuracy. The algorithm used in this project is said to be among the most accurate available, yet its accuracy still stops at 97%. From my personal experience, it works for basic emotions, but its power to assess complex emotions or micro-expressions is not close to the human capability of understanding emotional expressions. Current emotion detection systems can still struggle to identify emotions accurately because of cultural differences, individual differences, and context. The technology is also prone to algorithmic bias, leading to inaccuracies for certain groups of people. My own experience with the tool involved more or less the same problems: the emotion detection usually tagged me as sad rather than neutral. This kind of algorithm is a black box, which makes it harder to understand what goes wrong when it gives a very different result than expected.

Face recognition systems also have their own disadvantages. One of them is the inability to recognize a face from other angles: the camera needs to be stable enough to see the face horizontally aligned, and this requirement can limit some of the wearer's actions while wearing the device.

References

Ekman, P. (1970). Universal facial expressions of emotions. *California Mental Health Research Digest*, *8*(4), 151–158.

Adams, R. B., Ambady, N., Macrae, C. N., & Kleck, R. E. (2006). Emotional expressions forecast approach-avoidance behavior. *Motivation and Emotion*, *30*(2), 177–186. https://doi.org/10.1007/s11031-006-9020-2

Ferrari, P. F., & Coudé, G. (2018). Mirror neurons, embodied emotions, and empathy. *Neuronal Correlates of Empathy*, 67–77. https://doi.org/10.1016/b978-0-12-805397-3.00006-1

DeepFace https://github.com/serengil/deepface

OpenCV https://opencv.org

early sensor experiments