Mini Electronic Drum Set

A tiny drum set made by clustering and mapping sounds

Motivation

The idea was to recreate a small, simple version of a drum kit in which the sounds are processed electronically and mapped to corresponding drum sounds. The motivation came from the fact that I recently started learning to play the drums and hoped to use this small set to practice at home with headphones on, without disturbing anyone (which turned out to be a naive assumption).

Initial Idea

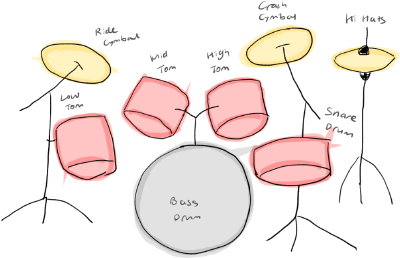

The picture on the right is the initial sketch of the drum set. Each piece is color-coded to match its equivalent drum in the set shown in the left picture: all toms and the snare are in red, while all cymbals and the hi-hat are in yellow. The two blue circles represent tap buttons for the pedal movements that control the bass drum and the hi-hat. As shown in the sketch, I planned to recycle materials that can be found easily: wine corks for the toms and the snare, beer caps for the cymbals and the hi-hat, and finally a metal box with a piezo mic attached inside, which becomes the platform for all the drums.

Here are the steps for how I initially thought it should work:
1. The user taps on the drum.
2. The piezo mic picks up the sound.
3. The program detects the unique frequency of the sound.
4. An equivalent drum sound is produced.
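The four planned steps can be sketched as a toy Python program. This is only an illustration of the original frequency-based idea, not what the final Pd patch does (the build relied on timbreID's timbral features instead). It assumes a hypothetical 8 kHz sampling rate, hypothetical reference pitches for each pad, and treats the peak of a naive DFT as the "unique frequency".

```python
import math

SAMPLE_RATE = 8000  # assumed sampling rate for this toy example

# Hypothetical reference pitches (Hz) for each pad, as if measured during setup.
PADS = {"snare": 400.0, "tom": 250.0, "hi-hat": 1500.0}

def dominant_frequency(frame, sample_rate=SAMPLE_RATE):
    """Step 3: return the frequency of the strongest bin of a naive DFT."""
    n = len(frame)
    best_bin, best_mag = 0, -1.0
    for k in range(1, n // 2):  # skip DC, stop below Nyquist
        re = sum(x * math.cos(2 * math.pi * k * i / n) for i, x in enumerate(frame))
        im = -sum(x * math.sin(2 * math.pi * k * i / n) for i, x in enumerate(frame))
        mag = math.hypot(re, im)
        if mag > best_mag:
            best_bin, best_mag = k, mag
    return best_bin * sample_rate / n

def on_tap(frame):
    """Steps 2-4: take the mic frame, find its frequency, name the closest pad."""
    freq = dominant_frequency(frame)
    return min(PADS, key=lambda pad: abs(PADS[pad] - freq))
```

A real tap is a noisy burst rather than a clean tone, which is one reason a single frequency turned out to be a fragile way to tell the pads apart.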

The following challenges immediately arose:
- How to ensure the sounds being fed in are distinct enough to be classified into different patterns?
- How to realize pedal movements?
- How to allow multiple drums to be detected and played?
- How to map the intensity and duration of each output sound to the input sound? Would the texture of the materials matter?

Implementation

I used timbreID[1], a library for analyzing audio features in Pure Data (Pd). In particular, its drum kit example already had the functionality to map sample drum sounds to real-time input sounds.

Preparing the Instruments

Before playback, timbreID must be trained with sounds that are timbrally different from one another. The initial setup had only 4 classes available, but I added two more. To produce timbrally distinct sounds, I used various materials, from beer caps to wine corks, as planned. Also, to make sharp, short sounds, I decided to tap with metal chopsticks rather than with my fingers as originally planned. After some experimentation, I found a combination that worked somewhat well.
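One way to see why the choice of material matters is a simple timbral feature such as the spectral centroid, the magnitude-weighted average frequency of a sound, which roughly tracks its "brightness". The sketch below is a hypothetical illustration, not timbreID code: the spectra are made-up magnitude lists, and a bright beer-cap tap is assumed to put more energy in high bins than a dull cork tap.

```python
def spectral_centroid(magnitudes, bin_hz):
    """Magnitude-weighted mean frequency; a higher value means a 'brighter' sound."""
    total = sum(magnitudes)
    if total == 0:
        return 0.0  # silent frame: no meaningful centroid
    return sum(k * bin_hz * m for k, m in enumerate(magnitudes)) / total

# Hypothetical magnitude spectra with 1000 Hz per bin.
cork = [5, 3, 1, 0, 0]  # energy concentrated in low bins (dull)
cap = [0, 1, 2, 4, 5]   # energy concentrated in high bins (bright)
```

The further apart such features are for different pads, the easier the later clustering and matching steps become.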

Training

File:training-final.mp4

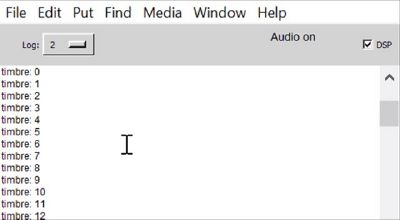

To train, the ‘train’ spigot should be turned on. Input sounds are made by tapping on each object 5 to 10 times; here I am tapping each instrument about 10 times. Each input is shown in the Pd window.
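Conceptually, training amounts to storing one feature vector per detected tap while the ‘train’ spigot is open. The minimal Python sketch below models that gate; the class and attribute names are hypothetical, and the feature vectors are assumed to come from an earlier analysis stage.

```python
class TrainingSet:
    """Stores one feature vector per detected onset while training is switched on."""

    def __init__(self):
        self.train_on = False  # plays the role of the 'train' spigot
        self.examples = []     # index in this list = input order, starting from 0

    def on_onset(self, features):
        """Record the features if training is on; return the new index or None."""
        if self.train_on:
            self.examples.append(list(features))
            return len(self.examples) - 1
        return None
```

Tapping each instrument about 10 times therefore yields about 10 consecutive entries per instrument, which is what the clustering step later groups together.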

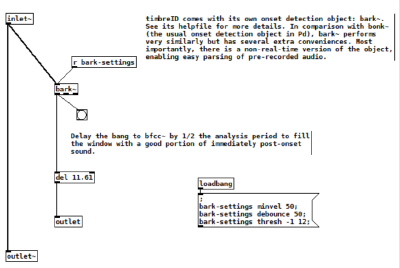

What happens here is that the taps are detected by ‘bark~’ in the ‘pd onsets’ sub-patch, which measures the amount of growth in the bands of the input’s spectrum. Here I lowered the debounce time, the period after an onset during which the detector is deaf to new attacks, to 50 ms. It was initially set as high as 200 ms to prevent the sample playback from retriggering itself, but with headphones on, the playback no longer reaches the mic, so it could be lowered to allow a slightly faster rhythm.
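The growth-plus-debounce idea can be sketched in Python. This is a simplified stand-in, not ‘bark~’ itself: it assumes the per-band energies of each analysis frame are already available, and the threshold value is hypothetical.

```python
class OnsetDetector:
    """Flags an onset when total band-energy growth exceeds a threshold,
    then ignores further onsets for debounce_ms (the detector is 'deaf')."""

    def __init__(self, threshold, debounce_ms=50.0):
        self.threshold = threshold
        self.debounce_ms = debounce_ms
        self.prev = None
        self.last_onset_ms = None

    def process(self, bands, time_ms):
        growth = 0.0
        if self.prev is not None:
            # only count bands whose energy increased since the previous frame
            growth = sum(max(0.0, b - p) for b, p in zip(bands, self.prev))
        self.prev = list(bands)
        deaf = (self.last_onset_ms is not None
                and time_ms - self.last_onset_ms < self.debounce_ms)
        if growth > self.threshold and not deaf:
            self.last_onset_ms = time_ms
            return True
        return False
```

With a 50 ms debounce, two hits 70 ms apart are both detected; with 200 ms, the second would be ignored, which is why the lower setting allows a faster rhythm.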

One more thing to note: the master volume should be muted during training, because otherwise the sample playback would be picked up by the mic and included in the training input. After training, the ‘train’ spigot should be turned off again.

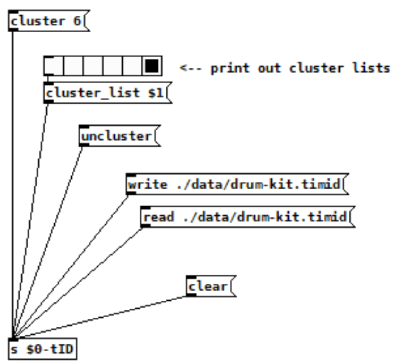

The training dataset can be saved with the ‘write’ message and read back with the ‘read’ message. I added an extra pair of write and read messages for backups.
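The write/read-with-backup workflow can be mirrored in a short Python sketch. The JSON format and the helper names are assumptions for illustration only; timbreID's ‘write’ and ‘read’ messages use their own file format.

```python
import json
import os
import tempfile

def write_dataset(examples, path):
    """Save the list of feature vectors (order preserved) as JSON."""
    with open(path, "w") as f:
        json.dump(examples, f)

def read_dataset(path):
    """Load feature vectors saved by write_dataset."""
    with open(path) as f:
        return json.load(f)

def write_with_backup(examples, path, backup_path):
    """Hypothetical helper: write the same data to a main file and a backup file."""
    write_dataset(examples, path)
    write_dataset(examples, backup_path)

# Example: save to a temporary directory, then read the backup back.
tmp = tempfile.mkdtemp()
main_file = os.path.join(tmp, "drums.json")
backup_file = os.path.join(tmp, "drums-backup.json")
write_with_backup([[0.1, 0.2], [0.9, 0.8]], main_file, backup_file)
restored = read_dataset(backup_file)
```

Keeping the order intact matters because the cluster listings refer to examples by their input index.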

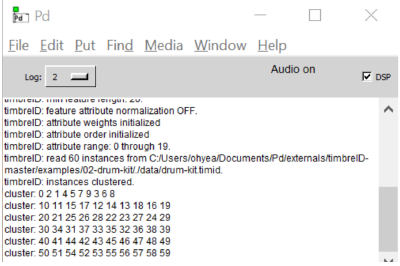

Clustering

After training, the input sounds should be grouped by timbre with the ‘cluster’ message. Here I changed the number of clusters from 4 to 6. The members of each cluster can be printed in the Pd window using the radio buttons connected to the ‘cluster list’. The numbers identify the members by input order, starting from 0.
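To make the grouping concrete, here is a small agglomerative clustering sketch in Python: every example starts as its own cluster, and the two clusters with the closest centroids are merged until the requested number remains. This is a generic stand-in for illustration; timbreID's own clustering algorithm and distance measure may differ.

```python
import math

def cluster(examples, k):
    """Merge the two clusters with the closest centroids until k remain.
    Returns lists of example indices (input order, starting from 0)."""
    clusters = [[i] for i in range(len(examples))]

    def centroid(members):
        dims = len(examples[0])
        return [sum(examples[i][d] for i in members) / len(members) for d in range(dims)]

    while len(clusters) > k:
        best = None
        for a in range(len(clusters)):
            for b in range(a + 1, len(clusters)):
                dist = math.dist(centroid(clusters[a]), centroid(clusters[b]))
                if best is None or dist < best[0]:
                    best = (dist, a, b)
        _, a, b = best
        clusters[a] = sorted(clusters[a] + clusters[b])
        del clusters[b]  # b > a, so index a is unaffected
    return clusters
```

For example, with hypothetical 2-D features `[[0, 0], [10, 10], [0.1, 0], [10, 10.2], [0, 0.1]]` and `k=2`, the result groups indices `[0, 2, 4]` and `[1, 3]`, much like the member lists printed in the Pd window.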

Playing

Finally, the instruments can be played by hitting them one at a time, because there is only one input channel at the moment. Notice how different instruments trigger different samples. What happens here is that the program looks for the previously trained data that best matches the input sound. When the ‘id’ spigot is turned on, the cluster number is printed in the Pd window whenever a sample is played back. For playback, I loaded my own drum samples to fit my mini drum set-up. I got each audio sample from SampleSwap[2], an audio sample sharing site, and then combined them into one audio file at even 1-second intervals so that it could be processed in Pd.
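The playback logic can be sketched as a nearest-neighbour lookup followed by an offset into the combined sample file. In this Python sketch the `labels` list (example index to cluster number), the 44.1 kHz rate, and the assumption that cluster n's drum sound occupies the n-th 1-second slot are all hypothetical; the actual patch's lookup may be organized differently.

```python
import math

SLOT_SECONDS = 1.0  # each drum sample starts on an even 1 s boundary in the combined file

def nearest_example(examples, features):
    """Index of the trained example closest to the incoming features."""
    return min(range(len(examples)), key=lambda i: math.dist(examples[i], features))

def sample_start(cluster_id, sample_rate=44100):
    """Start position (in samples) of a cluster's sound in the combined file,
    assuming cluster n's sound sits in the n-th 1 s slot."""
    return int(cluster_id * SLOT_SECONDS * sample_rate)

def play(examples, labels, features, sample_rate=44100):
    """Return (cluster id, start sample) for the sound to trigger."""
    cluster_id = labels[nearest_example(examples, features)]
    return cluster_id, sample_start(cluster_id, sample_rate)
```

Spacing the samples evenly means the start position is a simple multiplication, so no per-sample offset table is needed.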