This page documents my work and experiments done during the "Max and I, Max and Me" course. Feel free to copy, but give attribution where appropriate.

other people's work to investigate

- F.Z._Aygüler AN EYE TRACKING EXPERIMENT

- Does social distancing change the image of connections between friends, family and strangers? (includes patch walkthrough)

- Paulina Magdalena Chwala (VR, OSC, Unity)

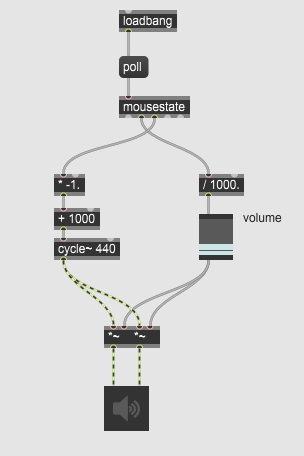

homework 1: theremin

Assignment: Please do your own first patch and upload onto the wiki under your name

I built a simple theremin to get to know the Max workflow. Move your mouse cursor around to control the pitch and volume.

File:simple_mouse_theremin.maxpat
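
The mapping behind such a patch can be sketched outside Max. The Python below is a minimal sketch and not a transcription of the patch: it assumes that the horizontal position controls pitch on an exponential scale and the vertical position controls volume. The function name `mouse_to_theremin`, the screen size and the frequency range (110 to 880 Hz) are illustrative assumptions.

```python
def mouse_to_theremin(x, y, width=1920, height=1080, f_min=110.0, f_max=880.0):
    """Map a mouse position to (frequency in Hz, amplitude 0..1).

    Assumptions (not taken from the patch): x controls pitch,
    y controls volume, and the top of the screen is loudest.
    """
    # Normalise to 0..1 and clamp so off-screen values stay in range.
    nx = min(max(x / width, 0.0), 1.0)
    ny = min(max(y / height, 0.0), 1.0)
    # Exponential pitch mapping: equal mouse distances give equal musical intervals.
    frequency = f_min * (f_max / f_min) ** nx
    # Screen y grows downwards, so invert it for volume.
    amplitude = 1.0 - ny
    return frequency, amplitude
```

An exponential pitch curve is chosen here because pitch perception is roughly logarithmic; a linear mapping would also work but would crowd the low notes into a small part of the screen.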

Objects used:

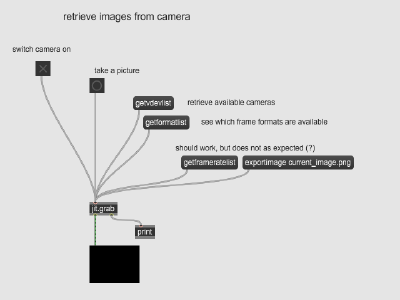

retrieve images from a camera

This simple patch allows you to grab (still) images from the camera.

File:2021-04-23_retrieve_images_from_camera_JS.maxpat

Objects used:

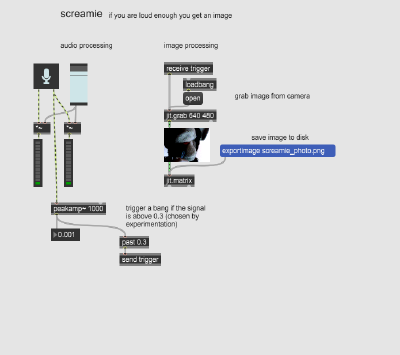

project: screamie

If you scream loud enough ... you will get a selfie.

This small piece is a first attempt. It is also a snarky comment on social media and selfie culture.

File:2021-04-25_screamie_JS.maxpat

Objects used:

- levelmeter~ - to display the audio signal level

- peakamp~ - find peak in signal amplitude (of the last second)

- past - send a bang when a number is larger than a given value

- jit.grab - to get image from camera

- jit.matrix - to buffer the image and write to disk
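
The trigger logic of the objects listed above can be sketched in Python. This is a rough model under stated assumptions, not the patch itself: the audio arrives as blocks of samples, the threshold value of 0.8 is made up, and the trigger fires only when the level rises above the threshold after having been at or below it. The function names are hypothetical.

```python
def peak_amplitude(samples):
    """Largest absolute sample value in a block of audio samples."""
    return max((abs(s) for s in samples), default=0.0)


def scream_triggers(peaks, threshold=0.8):
    """Return the indices of the peak values that would trigger a selfie.

    A trigger fires only on a rising crossing of the threshold, so one
    long scream yields one selfie instead of a burst of them.
    The threshold of 0.8 is an assumed value.
    """
    triggers = []
    above = False  # was the previous level above the threshold?
    for i, level in enumerate(peaks):
        if level > threshold and not above:
            triggers.append(i)
        above = level > threshold
    return triggers
```

For example, `scream_triggers([0.1, 0.9, 0.95, 0.2, 0.85])` reports two triggers: one for the first scream (which spans two consecutive values) and one for the second.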

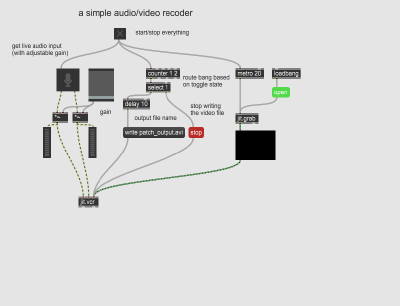

record and save audio and video data

This patch demonstrates how to get audio/video data and how to write it to disk.

File:2021-04-23_audio_video_recorder_JS.maxpat

Objects used:

- ezadc~ - to get audio input

- meter~ - to display (audio) signal levels

- metro - to get a steady pulse of bangs

- counter - to count bangs/toggles

- select - to detect certain values in number stream

- delay - to delay a bang (to give DSP processing a head start)

- jit.grab - to get images from camera

- jit.vcr - to write audio and video data to disk

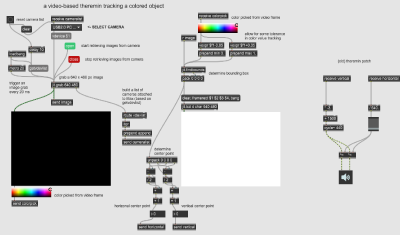

project: color based movement tracker

This movement tracker uses color as a marker for an object to follow. Hold the object in front of the camera and click on it to select an RGB value to track. The object's color needs to be distinctly different from the background for this simple approach to work. The patch yields both a bounding box and its center point for further processing.

The main trick is to overlay the video display area (jit.pwindow) with a color picker (suckah) to get the color. A drop-down menu is added as a convenience to select the input camera (e.g. an external one vs. the built-in device). The rest is math.

File:2021-04-23_color_based_movement_tracker_JS.maxpat

Objects used:

- metro - steady pulse to grab images from camera

- jit.grab - actually grab an image from the camera upon a bang

- prepend - put a stored message in front of any input message

- umenu - a drop-down menu with selection

- jit.pwindow - a canvas to display the image from the camera

- suckah - pick a colour from the underlying part of the screen (yields RGBA as 4-tuple of floats (0.0 .. 1.0))

- swatch - display a colour (picked)

- vexpr - for (C-style) math operations with elements in lists

- jit.findbounds - search for values in a given range in the video matrix

- jit.lcd - a canvas to draw on

- send & receive - avoid cable mess
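
The "math" part of the tracker (a bounding box around all pixels close to the picked color, plus its center point) can be sketched in plain Python. This is an illustration under assumptions and not the patch's implementation: the image is modelled as a list of rows of `(r, g, b)` float tuples in the range 0.0 .. 1.0, and the per-channel `tolerance` of 0.1 as well as the function names are hypothetical.

```python
def find_bounds(image, target, tolerance=0.1):
    """Return ((min_x, min_y), (max_x, max_y)) of matching pixels, or None.

    image: list of rows, each pixel an (r, g, b) tuple of floats in 0.0..1.0
    target: the picked color as an (r, g, b) tuple
    tolerance: assumed per-channel distance that still counts as a match
    """
    xs, ys = [], []
    for y, row in enumerate(image):
        for x, pixel in enumerate(row):
            # A pixel matches if every channel lies within the tolerance.
            if all(abs(c - t) <= tolerance for c, t in zip(pixel, target)):
                xs.append(x)
                ys.append(y)
    if not xs:
        return None  # the color does not occur in this frame
    return (min(xs), min(ys)), (max(xs), max(ys))


def center(bounds):
    """Center point of a bounding box returned by find_bounds."""
    (x0, y0), (x1, y1) = bounds
    return ((x0 + x1) / 2, (y0 + y1) / 2)
```

Because every matching pixel widens the box, a single stray pixel of a similar color elsewhere in the frame distorts the result. This is why the tracked color has to differ clearly from the background.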

homework 2: video theremin

Assignment: Combine your first patch with sound/video conversion/analysis tools

This patch combines the previously developed color-based movement tracker with the first theremin.

final project 1: i see you and you see me

- in collaboration with Luise Krumbein

Video conference calls replaced in-person meetings on a large scale in a short amount of time. Most software tries to mimic a conversation but actually either forces people into the spotlight and into monologues or off to the side into silence. One-to-one communication allows a user to concentrate on the (one) other, but group meetings blur the personalities and pigeonhole expression into a cookie-cutter shape of what is technically efficient and socially barely tolerable. The final project in this course seeks to explore different representations in synchronous online group communication.

To shift the perception, data will be gathered from multiple (ambient) sources. This means tapping into public data sets for broad context. It also means gathering hyper-local/personal data via an Arduino or similar microcontroller (probably an ESP8266) for sensing the individual. These data streams will be combined to produce a visual output using Jitter. The result aims to represent the other humans in a manner that emphasizes personality rather than emulating the technicalities of face-to-face exchanges.